This Reads Like That - Deep Learning for Interpretable Natural Language Processing

Abstract

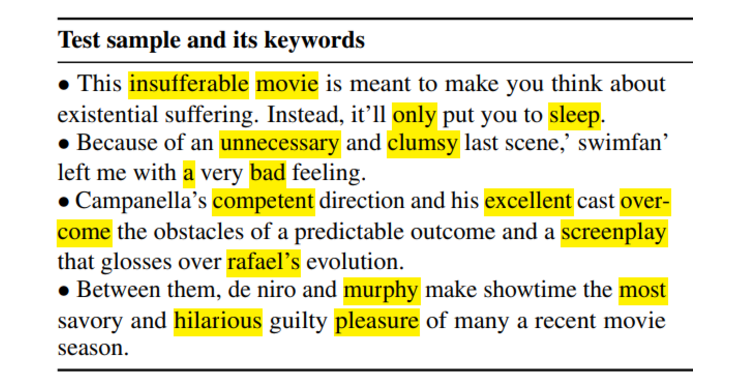

Prototype learning, a popular machine learning method for inherently interpretable decisions, leverages similarities to learned prototypes to classify new data. Although prototype-based networks have mostly been used in computer vision, this work extends them to natural language processing. It introduces a learned weighted similarity measure that focuses on informative dimensions of pre-trained sentence embeddings, and a post-hoc explainability mechanism that extracts prediction-relevant words from both the prototype and the input sentence. The authors show that the method improves predictive performance on AG News and RT Polarity compared with a previous prototype-based approach, and that its explanations are more faithful than those of rationale-based recurrent convolutions.
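To make the idea of a weighted similarity concrete, the following is a minimal sketch, not the authors' implementation. It assumes the measure takes the form of a cosine similarity in which each embedding dimension is scaled by a non-negative weight; the abstract does not state the exact formula. The function names `weighted_cosine_similarity` and `most_similar_prototype`, the `eps` stabilizer, and the toy vectors in the usage note are all hypothetical. In the described method the weights would be learned during training; here they are supplied by hand.

```python
import numpy as np


def weighted_cosine_similarity(u, v, w, eps=1e-12):
    """Cosine similarity with one non-negative weight per dimension.

    Assumed form (not confirmed by the abstract):
        sum_i w_i u_i v_i / (sqrt(sum_i w_i u_i^2) * sqrt(sum_i w_i v_i^2))
    """
    u, v, w = (np.asarray(a, dtype=float) for a in (u, v, w))
    numerator = np.sum(w * u * v)
    denominator = np.sqrt(np.sum(w * u * u)) * np.sqrt(np.sum(w * v * v))
    # eps guards against division by zero for all-zero weighted vectors.
    return float(numerator / (denominator + eps))


def most_similar_prototype(x, prototypes, w):
    """Return the index of the prototype most similar to x, plus all scores."""
    sims = np.array([weighted_cosine_similarity(x, p, w) for p in prototypes])
    return int(np.argmax(sims)), sims
```

For the toy input `x = [1, 0, 5]` and prototypes `[[1, 0, -5], [0, 1, 5]]`, uniform weights `[1, 1, 1]` select the second prototype, while weights `[1, 1, 0]`, which ignore the third dimension, select the first. This illustrates how down-weighting uninformative dimensions can change which prototype an input "reads like".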