Cascaded Language Models for Cost-effective Human-AI Decision-Making

Abstract

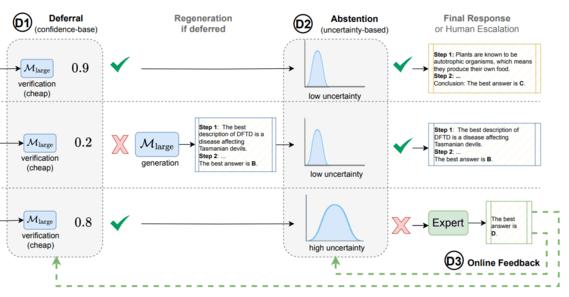

A key challenge in human-AI decision-making is balancing three factors: the correctness of predictions, the cost of the knowledge and reasoning they require, and the confidence needed to decide whether to abstain or escalate to a human expert. This paper presents a cascaded LLM framework that adaptively delegates tasks across three tiers: a base model, a stronger but more costly model, and a human expert who is consulted when the model cascade abstains. The method follows a two-stage policy. First, a deferral policy decides whether to accept the base model's answer or to regenerate it with the larger model. Second, an abstention policy decides whether the cascade's output is certain enough to return or should be referred to a human. An online learning mechanism incorporates human feedback so that the system can adapt as task difficulty changes. Evaluations on ARC-Easy, ARC-Challenge, MMLU, MedQA, and MedMCQA show that the cascaded strategy improves accuracy in most settings while reducing cost and handling abstentions in a principled way.
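The two-stage routing described above can be illustrated with a small function. This is a minimal sketch under assumptions that are not taken from the paper: each model is a callable returning an answer together with a scalar confidence in [0, 1], both the deferral and abstention policies are fixed confidence thresholds, and costs are illustrative relative units. The names `cascade`, `CascadeConfig`, and the `stub_*` models are hypothetical, and the online learning component is not modeled here.

```python
from dataclasses import dataclass
from typing import Callable, Tuple

# Assumption: a model maps a query to (answer, confidence in [0, 1]).
Model = Callable[[str], Tuple[str, float]]


@dataclass
class CascadeConfig:
    # Hypothetical values; the paper's actual policies may differ.
    defer_threshold: float = 0.7    # below this, regenerate with the large model
    abstain_threshold: float = 0.5  # below this, refer the query to a human
    base_cost: float = 1.0          # illustrative relative cost units
    large_cost: float = 10.0
    human_cost: float = 100.0


def cascade(query: str, base: Model, large: Model,
            human: Callable[[str], str],
            cfg: CascadeConfig) -> Tuple[str, str, float]:
    """Route a query base -> large -> human; return (answer, tier, total cost)."""
    answer, conf = base(query)
    cost, tier = cfg.base_cost, "base"

    # Stage 1 (deferral): accept the base answer or regenerate with the large model.
    if conf < cfg.defer_threshold:
        answer, conf = large(query)
        cost += cfg.large_cost
        tier = "large"

    # Stage 2 (abstention): if the cascade output is not certain enough,
    # abstain and escalate to the human expert.
    if conf < cfg.abstain_threshold:
        answer = human(query)
        cost += cfg.human_cost
        tier = "human"

    return answer, tier, cost


# Hypothetical stub models standing in for real LLMs and a human annotator.
def stub_base(query: str) -> Tuple[str, float]:
    return ("B", 0.9) if "easy" in query else ("B", 0.3)


def stub_large(query: str) -> Tuple[str, float]:
    return ("C", 0.8) if "medium" in query else ("C", 0.2)


def stub_human(query: str) -> str:
    return "D"


if __name__ == "__main__":
    cfg = CascadeConfig()
    for q in ("easy question", "medium question", "hard question"):
        print(q, cascade(q, stub_base, stub_large, stub_human, cfg))
```

With these stubs, the easy query stops at the base model at cost 1.0, the medium query is deferred to the large model at cost 11.0, and the hard query is escalated to the human at cost 111.0. Keeping the deferral threshold above the abstention threshold means an accepted base answer never triggers abstention on its own; a learned policy could replace either threshold without changing the routing structure.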