Tiny Autoregressive Recursive Models

Abstract

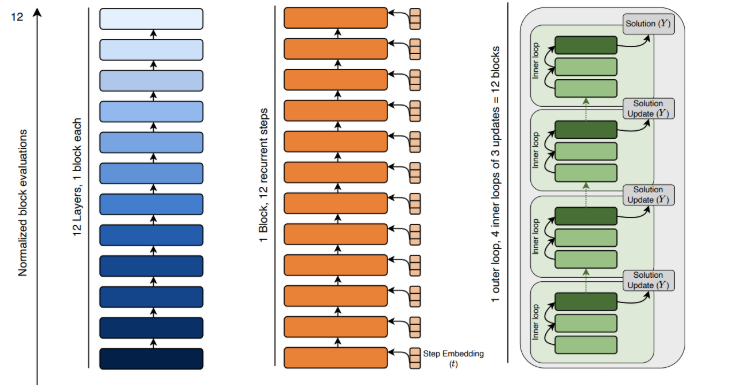

Tiny Recursive Models (TRMs) have shown strong performance on ARC-AGI via two-step refinement of an internal reasoning state and a predicted output, raising the question of whether similar refinement would also help autoregressive modelling. This paper proposes an Autoregressive TRM and evaluates it on small autoregressive tasks under compute-matched conditions. To isolate where gains come from, the authors design a suite of models that progressively transform a standard Transformer into a Tiny Autoregressive Recursive Model while holding the block design, token stream, and next-token objective fixed. Across character-level algorithmic tasks, some two-level refinement baselines perform strongly, but the full Autoregressive TRM does not show reliable gains. The results suggest promise for two-step refinement mechanisms in general while cautioning against the specific Autoregressive TRM architecture as a primary research direction.
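To make the idea of two-step refinement concrete, the following is a minimal toy sketch, not the architecture evaluated in the paper. It assumes that a single shared block first updates an internal reasoning state `z` several times and then updates the predicted output `y` once, repeated over a few cycles. The names `make_block`, `add`, `refine`, `n_latent`, and `n_cycles` are hypothetical, and the random `tanh` layer merely stands in for a small Transformer block.

```python
import math
import random


def make_block(dim, seed):
    """Hypothetical stand-in for a shared tiny network: v -> tanh(W v)."""
    rng = random.Random(seed)
    scale = 1.0 / math.sqrt(dim)
    weights = [[rng.gauss(0.0, scale) for _ in range(dim)] for _ in range(dim)]

    def block(v):
        # One dense layer with a tanh nonlinearity, applied to a list of floats.
        return [math.tanh(sum(w * x for w, x in zip(row, v))) for row in weights]

    return block


def add(*vectors):
    """Element-wise sum of equally sized vectors."""
    return [sum(parts) for parts in zip(*vectors)]


def refine(x, y, z, block, n_latent=3, n_cycles=2):
    """Assumed two-step refinement loop (illustrative only)."""
    for _ in range(n_cycles):
        # Step 1: refine the reasoning state z given input x and current output y.
        for _ in range(n_latent):
            z = block(add(x, y, z))
        # Step 2: refine the predicted output y from the updated reasoning state.
        y = block(add(y, z))
    return y, z


# Usage: the same block is reused for both steps, so depth comes from recursion.
block = make_block(dim=4, seed=0)
x = [0.5, -0.2, 0.1, 0.3]
y, z = refine(x, [0.0] * 4, [0.0] * 4, block)
```

In this sketch the effective depth grows with `n_latent` and `n_cycles` while the parameter count stays fixed, which is why comparisons against a standard Transformer need to be matched on compute rather than on parameters.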